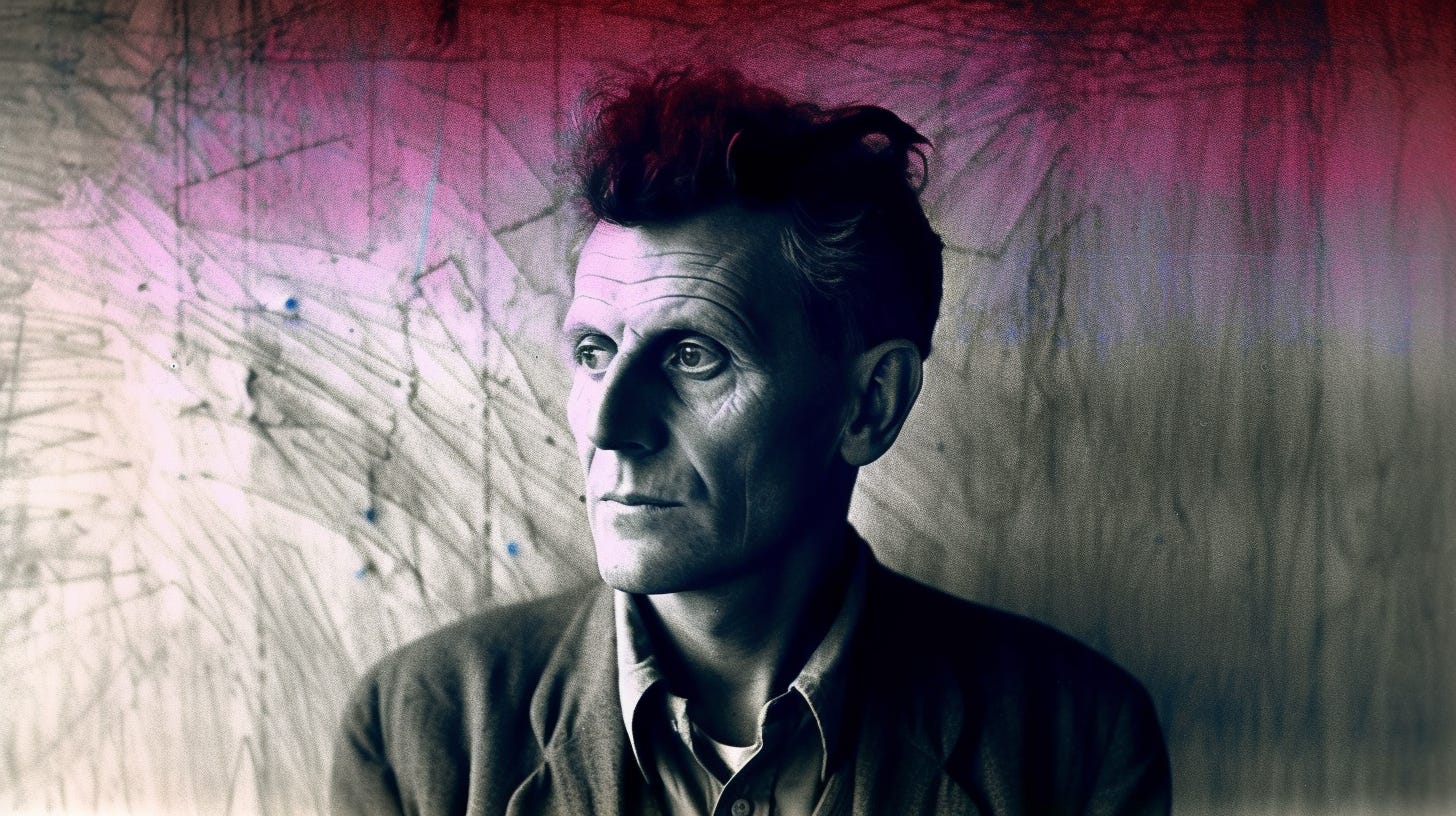

We're all Wittgensteinians now

The philosophical winners from LLMs

The development of Artificial Intelligence is a scientific and engineering project, but it’s also a philosophical one. Lingering debates in the philosophy of mind have the potential to be substantially demystified, if not outright resolved, through the creation of artificial minds that parallel capabilities once thought to be the exclusive province of the human brain.

And since our brain is how we know and interface with the world more generally, understanding how the mind works can shed light on every other corner of philosophy as well, from epistemology to metaethics. My view is thus the exact opposite of that of Noam Chomsky, who argues that the success of Large Language Models is of limited scientific or philosophical import, since such models ultimately reduce to giant inscrutable matrices. On the contrary, the discovery that giant inscrutable matrices can, under the right circumstances, do many things that otherwise require a biological brain is itself a striking empirical datum, and one that Chomsky simply dismisses a priori.

Biological brains differ in important ways from artificial neural networks, but the fact that the latter can emulate the capacities of the former really does contribute to human self-understanding. For one, it represents an independent line of evidence that the brain is indeed computational. But that’s just the tip of the iceberg. The success of LLMs may even help settle longstanding debates on the nature of meaning itself.

Yay, Wittgenstein!

There are two major strands of thought in the philosophy of meaning: Meaning as reference, and meaning as use.

The former camp is associated with the mind-body dualism of René Descartes. Classical semantic theories, such as the theory of meaning as reference, arise from this intuitive separation of subject and object. On this account, statements like “Joe Biden is 80 years old” have meaning in virtue of a truth-conditional correspondence between the words and the objective world, such as the correspondence between the proper name “Joe Biden” and the physical person for whom the name stands.

In the early 20th century, the logical positivists took this approach to its, well… logical conclusion. Bertrand Russell’s “logical atomism,” for instance, aimed to construct a “logically ideal language” whose minimal viable predicates would correspond to equally atomic facts about the world. Such a molecular approach to language predisposed philosophers of language to treat the meaning of a sentence or proposition as built up from its constituent parts, be they individual words or purely logical objects like the existential quantifier. As Wittgenstein put it in the Tractatus, “a proposition is a propositional sign in its projective relation to the world.”

The embrace of meaning as reference quickly led to some strange places. The logical positivist A. J. Ayer, for instance, concluded that sentences such as “Charity is good” are literally meaningless, since “goodness” lacks a clear referent in the external world for purposes of verification. Moral and aesthetic propositions are thus purely emotive, akin to saying “Yay charity!” Post-structuralists took this a step further by observing that the relationship between the sign and the signified (i.e., the arbitrary letters and phonemes that make up a word and the thing in itself) is often unstable, if not inescapably self-referential. Deconstructionism and skepticism about meaning per se soon followed. Fearing nihilism, others tried to rescue meaning by positing an ontological reality to natural kinds. Perhaps the meaning of the word “chair,” say, survives via its correspondence to the universal, Platonic chair.

The tendency for reference theories of meaning to teeter on a knife edge between skepticism and Platonism eventually led philosophers to take a different tack. This included Wittgenstein, who realized the impossibility of the positivist project later in life. His posthumous book, Philosophical Investigations, thus presented a “use” theory of meaning in its stead.

In a use theory of meaning, the pragmatics of speech come prior to the semantics. Words and propositions have meaning insofar as they do something. For Wittgenstein, this meant making a valid move in a language game; a game which arises within the holistic context of other language users and their social practices.

In turn, rather than words being the atomic bearers of meaning, meaning more typically resides in full sentences. As the philosopher Joseph Heath notes in Following the Rules, this is an essentially Kantian insight, albeit updated for the linguistic turn:

> The dramatic conceptual revolution initiated by Kant's Critique of Pure Reason begins with the suggestion that words and concepts may not be the appropriate explanatory primitive. It may be that whole sentences (i.e. judgements) are the primary bearers of meaning, and that the meaning of words is derived from the contribution they make to the meaning of sentences in which they occur. … Sentences are, after all, the basic unit we use in order to do something with language—to make an assertion, give an order, or ask a question. It is not hard to see how social practices could provide rules for the use of expressions in this way (and thus, how the norms implicit in these practices could confer meaning on such expressions).

On this account, the meaning of a sentence isn’t built up from its constituent parts, but rather the meaning of words is inferred down from the use and context of entire sentences. This is why, when learning a new word, we often ask for it to be used in a sentence, and can even make perfectly appropriate use of words that we struggle to define when put on the spot. Common linguistic errors, such as saying “for all intensive purposes” rather than “for all intents and purposes,” arise for similar reasons: we grok the meaning of a phrase from its use, not from the meaning of the discrete words and the rules for combining them.

I see the success of LLMs as vindicating the use theory of meaning, especially when contrasted with the failure of symbolic approaches to natural language processing. Transformer architectures were the critical breakthrough precisely because they provided an attention mechanism for conditioning on the full context of an input sequence, allowing a model to infer meaning from entire sentences rather than one word at a time. Frege thus had it right back in 1884, when he advised in The Foundations of Arithmetic to “never ask for the meaning of a word in isolation, but only in the context of a proposition.”
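To make the role of context concrete, here is a minimal sketch of single-head scaled dot-product self-attention in plain Python. It assumes, for simplicity, that queries, keys and values are the raw input vectors (real Transformers learn separate projection matrices, use many heads, and stack many layers), and the three-dimensional word vectors are invented for illustration. The point is only that the word “bank” enters both sentences with an identical vector but comes out different depending on its neighbors.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def self_attention(vectors):
    """Single-head scaled dot-product self-attention.

    Simplifying assumption: queries, keys and values are the input vectors
    themselves, with no learned projection matrices.
    """
    d = len(vectors[0])
    outputs = []
    for q in vectors:
        # Score this position against every position in the sequence.
        weights = softmax([dot(q, k) / math.sqrt(d) for k in vectors])
        # The output is the attention-weighted average of all value vectors.
        outputs.append([sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(d)])
    return outputs

# Hypothetical static embeddings: "bank" has the same vector in both sentences.
emb = {
    "river": [1.0, 0.0, 0.0],
    "money": [0.0, 1.0, 0.0],
    "bank":  [0.5, 0.5, 1.0],
    "the":   [0.1, 0.1, 0.1],
}

s1 = ["the", "river", "bank"]
s2 = ["the", "money", "bank"]
bank_in_s1 = self_attention([emb[w] for w in s1])[2]
bank_in_s2 = self_attention([emb[w] for w in s2])[2]
print(bank_in_s1)
print(bank_in_s2)
```

Each output is a weighted average over the whole sequence, so no word's contextual representation is computed in isolation: “bank” leans toward the “river” dimension in the first sentence and toward the “money” dimension in the second.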

In particular, LLMs lend credence to a specific pragmatist theory of meaning known as “semantic inferentialism,” most commonly associated with Hegel, Wilfrid Sellars, and Robert Brandom. Inferentialism argues that expressions gain their meaning from their inferential relations with other expressions, as inferences are a primitive kind of “doing.” From “Pumpkin is a cat,” for instance, one is entitled to infer that Pumpkin is a mammal. However, from “Pumpkin is a mammal,” one is not entitled to infer that Pumpkin is a cat. This inferential asymmetry between cat and mammal is what underlies the classic problem of universals, only now understood in purely pragmatic, post-metaphysical terms.
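The asymmetry can be pictured as a directed graph of entitlements. The following is a toy sketch, not Brandom's own formalism: the rule table `ENTITLEMENTS` and the helper names are hypothetical, and real material inferences are far richer than a taxonomy of kinds.

```python
# Hypothetical entitlement rules: from "x is a <key>", one may infer "x is a <value>".
ENTITLEMENTS = {
    "cat": {"mammal"},
    "mammal": {"animal"},
}

def entitled_conclusions(kind):
    """Every kind one may infer from 'x is a <kind>' by chaining the rules."""
    seen = set()
    frontier = [kind]
    while frontier:
        current = frontier.pop()
        for nxt in ENTITLEMENTS.get(current, set()):
            if nxt not in seen:
                seen.add(nxt)
                frontier.append(nxt)
    return seen

def entitled(premise_kind, conclusion_kind):
    """Does 'x is a <premise_kind>' entitle us to 'x is a <conclusion_kind>'?"""
    return conclusion_kind in entitled_conclusions(premise_kind)

print(entitled("cat", "mammal"))  # True
print(entitled("mammal", "cat"))  # False
```

Nothing here says what a cat *is*; the content of “cat” is exhausted, in this toy, by the moves it licenses and the moves it does not.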

Inferentialism makes sense of LLMs as extracting semantic content from the statistical (read: inferential) patterns in their pre-training data. As the linguist J.R. Firth famously put it, “You shall know a word by the company it keeps,” a principle now made concrete through the machine learning concept of text embeddings.
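Firth's slogan can be illustrated with the crudest possible embedding: count which words share a sentence, then compare words by the cosine of their count vectors. The six-sentence corpus below is invented for illustration; modern text embeddings are learned by neural networks over vast corpora rather than counted, but they rest on the same distributional idea.

```python
import math
from collections import Counter, defaultdict

# A tiny hypothetical corpus.
corpus = [
    "the cat chased the mouse",
    "the dog chased the ball",
    "the cat ate the fish",
    "the dog ate the bone",
    "the car needs fuel",
    "the car needs repair",
]

def cooccurrence_vectors(sentences):
    """Map each word to counts of the other tokens it shares a sentence with."""
    vectors = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.split()
        for i, word in enumerate(tokens):
            for j, context in enumerate(tokens):
                if i != j:
                    vectors[word][context] += 1
    return vectors

def cosine(u, v):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(u[k] * v[k] for k in u.keys() & v.keys())
    norm_u = math.sqrt(sum(c * c for c in u.values()))
    norm_v = math.sqrt(sum(c * c for c in v.values()))
    return dot / (norm_u * norm_v)

vecs = cooccurrence_vectors(corpus)
print(round(cosine(vecs["cat"], vecs["dog"]), 3))  # 0.9
print(round(cosine(vecs["cat"], vecs["car"]), 3))  # 0.566
```

“Cat” and “dog” come out similar only because they keep similar company (“chased”, “ate”); nothing in the code specifies what either word refers to.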

Boo, Gary Marcus!

The above discussion should help put into perspective the obsession of Gary Marcus, today’s preeminent AI skeptic, with compositionality. The principle of compositionality states that “the meaning of a complex expression is a function of the meanings of its constituents and the way they are combined.” According to Marcus, “Large Language Models don’t directly implement compositionality — at their peril.” But if Wittgenstein and the inferential pragmatists are right, neither does the human mind. The dogma that models of natural language must have the same sort of compositionality found in programming and mathematics is thus little more than a repeat of the Cartesian fallacy committed by the logical positivists nearly a century ago.
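For contrast, this is what strict compositionality looks like in a formal setting. The sketch below is a hypothetical evaluator for arithmetic expressions written as nested tuples: the value of any complex expression is computed solely from the values of its parts and the operator joining them, so any subexpression can be swapped for another with the same value without changing the result.

```python
from typing import Union

# An expression is either a number or a tuple (operator, left, right).
Expr = Union[int, float, tuple]

OPERATORS = {
    "+": lambda a, b: a + b,
    "*": lambda a, b: a * b,
}

def meaning(expr: Expr) -> float:
    """Compositional semantics: the value of a complex expression depends only
    on the values of its constituents and the operator combining them."""
    if isinstance(expr, (int, float)):
        return expr  # atomic expressions denote themselves
    op, left, right = expr
    return OPERATORS[op](meaning(left), meaning(right))

# Replacing (2 + 3) with any other expression whose value is 5 cannot change the result.
print(meaning(("*", ("+", 2, 3), 4)))  # 20
print(meaning(("*", 5, 4)))            # 20
```

This substitution property is exactly what idioms and slips like “for all intensive purposes” fail to respect in ordinary speech.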

Of course, compositionality has many obvious benefits for interpretation, such as semantic stability. Yet natural languages are nothing like programming languages. Rather, they are products of cultural evolution, and derive their semantic content from ever changing norms and social practices. In other words, they’re unstable by design.

Consider the phenomenon of vague predicates as captured in the classic Sorites Paradox: if a heap of sand is reduced by a single grain at a time, at what exact point does it cease to be considered a heap? Wittgenstein considered vagueness to be a pervasive and unshakeable feature of natural language. Indeed, versions of the Sorites Paradox lie behind many of our thorniest philosophical debates, such as the debate over when a fetus becomes a person. While we might wish to define a clear cut-off in the law, when it comes to the deeper metaphysical question, there is simply no fact of the matter. Rather, such debates merely serve to demonstrate the limitations of ordinary language.
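The dilemma can be made concrete with a small sketch. Any precise definition of “heap” (here a hypothetical legal-style cutoff of 100 grains) must contain a point where a single grain flips the verdict, violating the Sorites premise that one grain never matters. The only predicate that respects that premise across the whole range while still calling 10,000 grains a heap is one that calls every pile a heap, including an empty one.

```python
MAX_GRAINS = 10_000

def boundary_points(predicate, max_grains=MAX_GRAINS):
    """Return every n at which removing one grain flips the verdict.
    The tolerance premise of the Sorites says this list must be empty."""
    return [n for n in range(1, max_grains + 1) if predicate(n) != predicate(n - 1)]

def sharp_heap(grains, cutoff=100):
    """Hypothetical precise definition: a heap is at least `cutoff` grains."""
    return grains >= cutoff

def tolerant_heap(grains):
    """Never lets one grain matter and calls MAX_GRAINS a heap, so it must give
    the same verdict at every size (the argument is deliberately ignored)."""
    return True

print(boundary_points(sharp_heap))     # [100]: one grain makes all the difference
print(boundary_points(tolerant_heap))  # []: tolerance respected...
print(tolerant_heap(0))                # True: ...but zero grains is now "a heap"
```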

As ordinary language users, humans make do with fuzzy concepts every day. Deep learning models capture this conceptual fuzziness in the notion of a latent space. Within a latent space, a chair can be interpolated into a table, as shown in the image above. The transition is completely smooth and differentiable, which raises the question: at what point does the chair cease being a chair and become a table?
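A toy version of the chair-to-table walk shows where the arbitrariness enters. The two-dimensional “latent codes” and the nearest-prototype labeling rule below are hypothetical stand-ins, not a real generative model: the path itself is perfectly smooth, and the point at which the label flips from chair to table is set entirely by the `margin` we choose to impose.

```python
import math

CHAIR = (0.0, 1.0)  # hypothetical latent code for a chair
TABLE = (1.0, 0.0)  # hypothetical latent code for a table

def lerp(a, b, t):
    """Linear interpolation between latent vectors a and b, for t in [0, 1]."""
    return tuple((1 - t) * x + t * y for x, y in zip(a, b))

def is_chair(z, margin=0.0):
    """Hypothetical labeling rule: z is a chair if it is closer to CHAIR than
    to TABLE by more than `margin`. The margin is an arbitrary choice."""
    return math.dist(z, TABLE) - math.dist(z, CHAIR) > margin

def first_non_chair(steps=100, margin=0.0):
    """Walk from CHAIR to TABLE and return the first t where the label flips."""
    for i in range(steps + 1):
        t = i / steps
        if not is_chair(lerp(CHAIR, TABLE, t), margin):
            return t
    return None

print(first_non_chair(margin=0.0))  # 0.5
print(first_non_chair(margin=0.5))  # 0.33
```

Changing the margin moves the boundary; nothing about the interpolated points themselves tells us which margin is correct.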

I submit that there is no fact of the matter here, either. A realistic model of natural language must, therefore, accommodate a similar conceptual fuzziness. Fortunately, neural networks seem more than up to the task. It’s as if our brain works on similar principles!
