Polluting the agentic commons

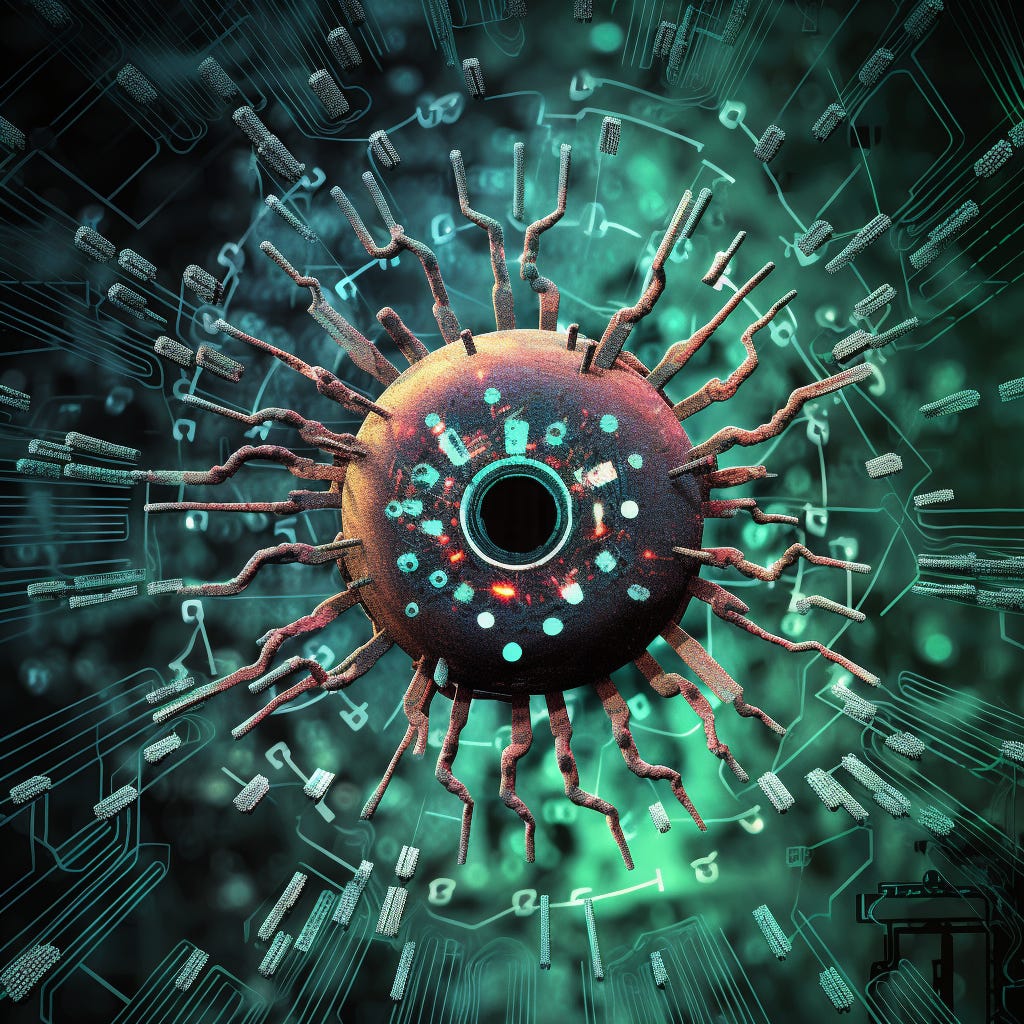

What happens when GPT agents go viral?

The risks from developing superintelligent AI are potentially existential, though hard to visualize. A better approach to communicating the risk is to illustrate the dangers from existing systems, and to then imagine how those dangers will increase as AIs become steadily more capable.

An AGI could spontaneously develop harmful goals and subgoals, or a human could simply ask an obedient AGI to do things that are harmful. This second bucket requires far fewer assumptions about arcane results in decision theory. And even if those arcane results are where the real x-risk lies, it’s easier to build an intuition for the risks by working from the bottom up, since scenarios in which “AGI gets an upgrade and escapes the lab” require a conceptual leap that’s easy to get distracted by.

After all, it didn't take long after GPT models became widely available for someone to build an autonomous agent literally called "ChaosGPT." We don't need to speculate about emergent utility functions. People will choose to unleash harmful agents just because.

The current trend is for these models to become smaller and smaller, to the point where they will soon run locally on a smartphone. Once a GPT agent is compact enough to run on a personal computer without you noticing, it’s inevitable that it will be used to wreak havoc just like any other kind of malware. Let’s call these evil GPTs “mal-bots.” Now imagine the following:

A spurned lover gives a mal-bot a profile of his ex: photos, name, location, worst fears, food allergies, etc.

The bot then has one mission: find ways to terrorize the ex while avoiding detection and making copies of itself.

The ex's phone rings off the hook with vaguely synthetic voices that threaten to kill her dog. Food containing allergens is constantly delivered to her home. Dozens of credit card applications are filed under her name.

She moves and changes her number, but the mal-bot deduces her new location from the contrails in an Instagram selfie.

The man eventually regrets what he's done but can't make it stop. The mal-bot is quietly running on thousands of computers around the world, coordinating with its own copies through cryptic posts from an anon account.

Seeking revenge, she gives in and downloads a mal-bot of her own.

Now solve for the equilibrium.

This scenario doesn't require superintelligence. These capabilities mostly already exist. GPTs can be used as an interface to any kind of programming environment or operating system. That means an autonomous mal-bot, packaged to avoid detection, could also take control of your computer while you sleep and do things like search for sensitive files, a task that would normally require human-like common-sense reasoning.

It only takes a small minority of people using AI in this way to create what one could call an “agentic tragedy of the commons.” The next stage is to simply imagine how much worse it will get as the models become more and more capable and harder and harder to contain.

The concept of a security mindset doesn't do it justice.